Etsy Agent AI

Launched an AI recommendation in Etsy’s agent tools to increase case accuracy and decrease review time by centralizing resources and reducing process steps.

Background

Apollo, Etsy’s internal task management and workflow platform, is designed to help teams handle member interactions, automate processes, and ensure quality assurance.

Within Apollo, the Financial Crimes team manually reviewed thousands of listings per month that had been flagged by automated sanctions detection. Agents were overwhelmed by juggling multiple software tools and by the complexity of the task. This resulted in slow decision times, inaccurate outcomes, and inflated operational costs. Any wrong decision could have significant federal sanctions implications.

Etsy’s internal software was built on an aging monolithic stack. Rather than attempting to reconfigure the software architecture and complex page layouts, the team decided to experiment with AI integration as a cheaper and more impactful approach to improving the experience.

My Role

As the lead product designer for the APX team, I conducted moderated research and usability sessions and created research scripts, interactive prototypes, and UI and UX design improvements. I collaborated closely with several PMs, product designers, engineers, and data analysts to define a product vision, roadmap, and testing metrics. Our team launched two high-impact iterations in under 90 days, from idea to implementation.

Iterations & Feedback

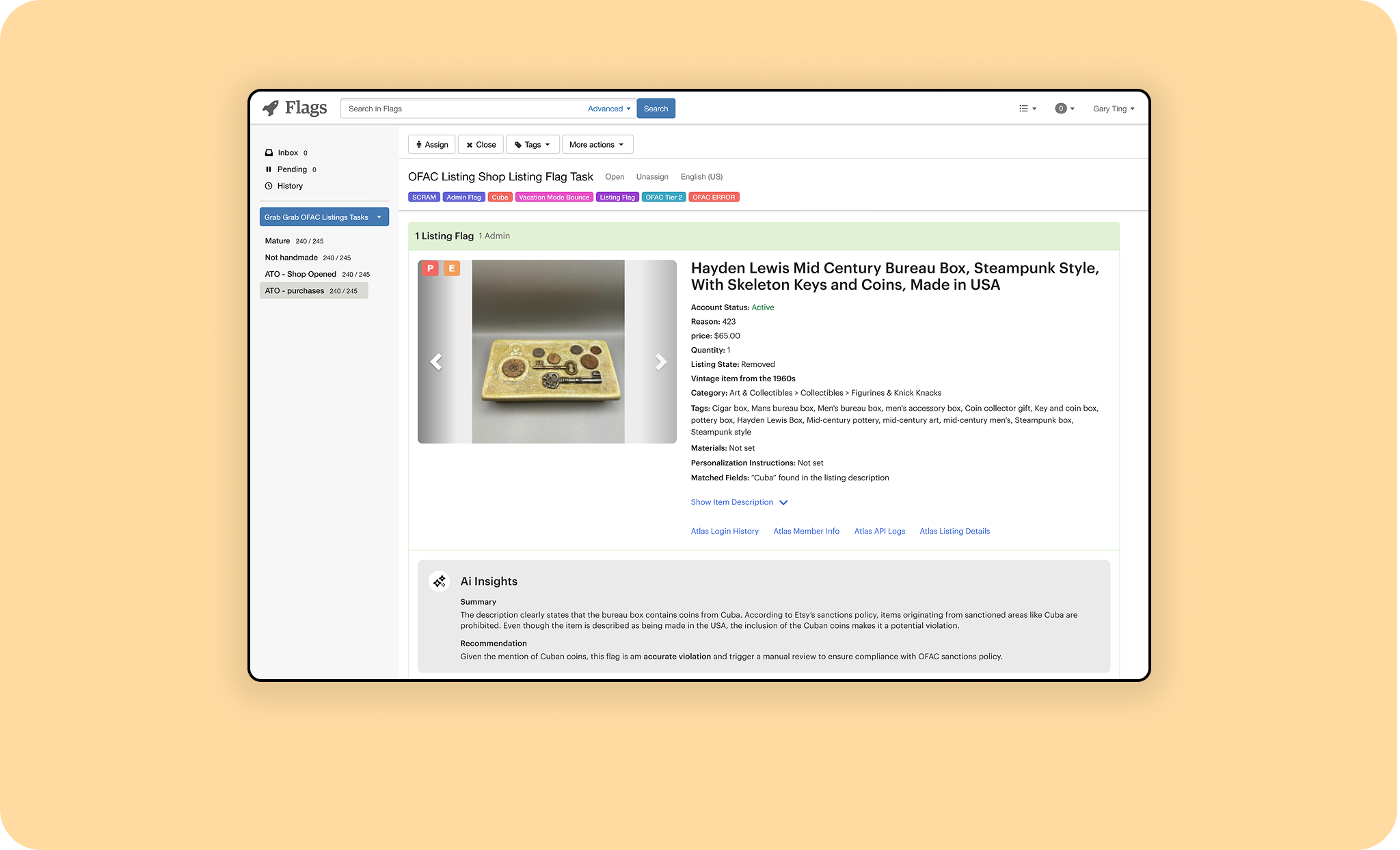

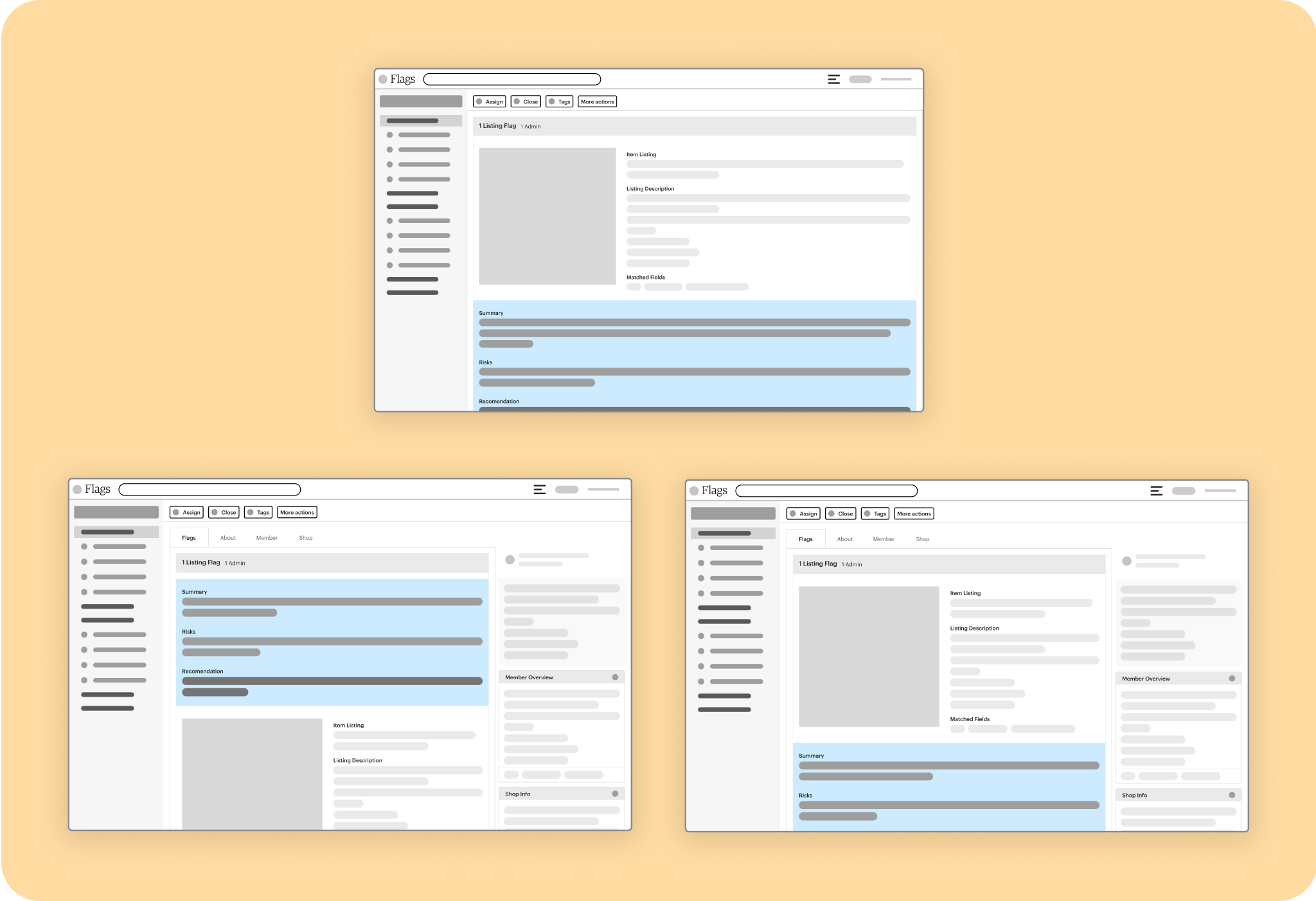

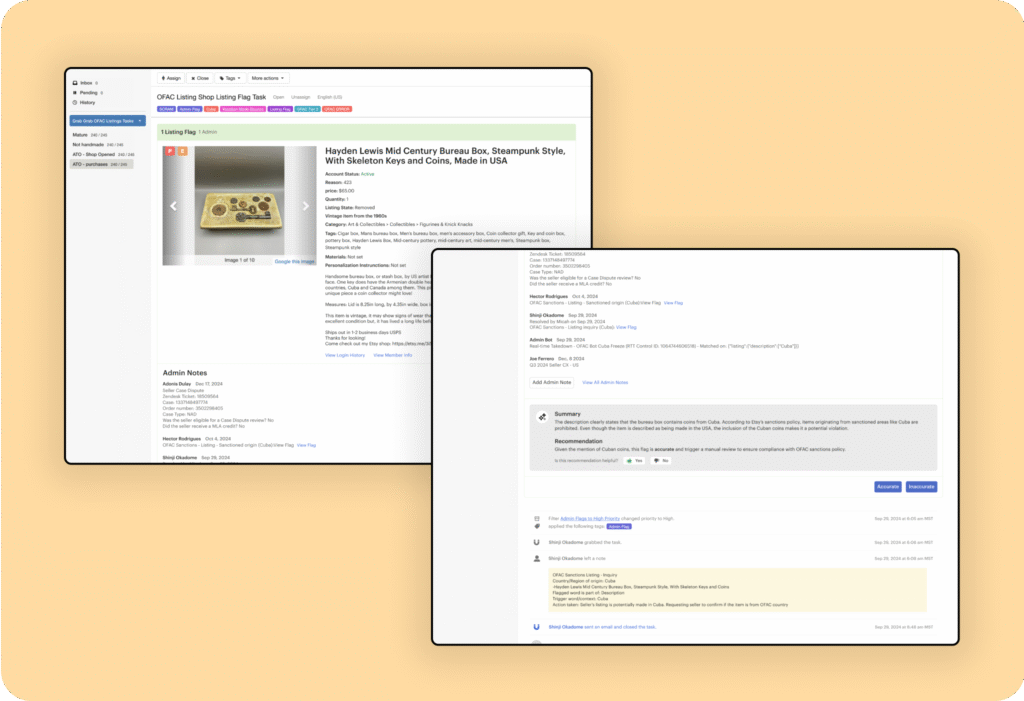

I began by auditing the existing OFAC review flow, mapping points of cognitive load and decision friction. I then redesigned the task page, exploring new layout concepts with different positions for introducing the LLM recommendation. Agent managers recommended letting agents review the overview before viewing the LLM recommendation, to reduce the chance of it influencing the outcome.

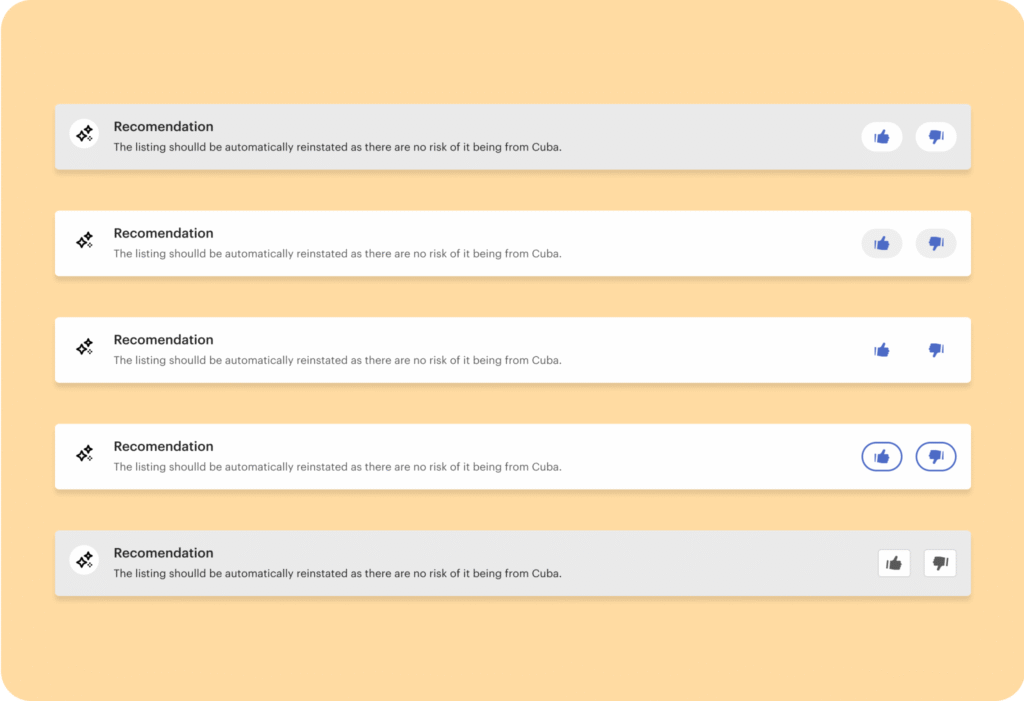

Due to the lack of qualitative data informing agent LLM integration, I included a feedback element asking agents whether the LLM’s recommendation was helpful. Only minimal changes could be made to the page layout, so clarity of communication was paramount.

With a prototype ready, I created a testing script and recruited several participants. Participants were asked to walk through their current workflow and then give feedback on the new designs.

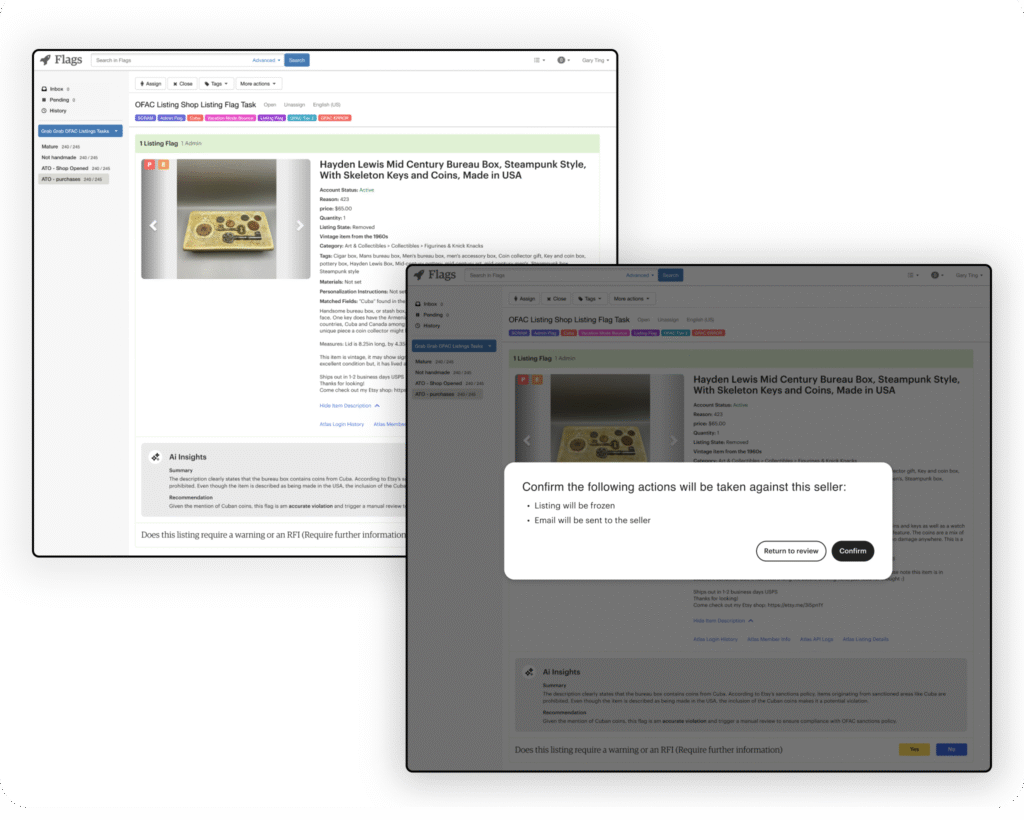

Highlights from the research sessions included a desire to incorporate AI technology and positive feedback on the layout improvements and workflow simplification. However, users still wanted simpler decision controls and more context around the AI feedback questions. Due to government compliance impacts, all designs had to be reviewed by legal before deployment.

Because of the sensitive nature of sanctions data, only internal and enablement agents could participate in the study, reducing sample diversity and extending validation timelines.

During initial testing, the helpfulness rating received 97% positive feedback across all sessions, indicating strong clarity and trust in the AI’s communication. Because the feature consistently scored highly, it was removed in later design iterations to streamline the interface.

Decision outcome controls were simplified by adding an explicit question and a binary control. The button colors were changed to differentiate the controls. Additionally, a modal dialog was included to warn agents of the actions that would be taken against the seller and to reduce false positives.

Outcome

- Integrated AI recommendation displaying a concise summary, risk rationale, and recommendation

- Increase in review accuracy from 82% to 95%

- Reduction in review time of 15%

- Established conversations with leadership about supporting AI applications and an Apollo information architecture refactor
